Chapter 2: Reasoning About Code#

In Chapter 1, we implemented a working 1-D heat equation solver. It runs, but it’s fragile, opaque, and hard to test or extend.

That’s a common situation in scientific computing:

write some code, see if it runs, fix what breaks, repeat.

It gets results fast, but the code becomes hard to understand, maintain, or extend.

Step back: How can we do better?

The key is to design intentionally:

by thinking through the structure before diving into code.

An upfront design exercise is valuable:

it clarifies purpose,

reveals assumptions,

identifies the main abstractions we’ll need.

But this isn’t Big Design Up Front. We start with a lightweight design then refine through an iterative process.

Goal: surface intent early, stay flexible as understanding grows.

Managing complexity via decomposition

Break the problem into smaller, meaningful pieces.

How?

Use abstraction to identify key concepts.

Use those abstractions to guide decomposition.

Write specifications for each piece: clear, testable descriptions of what each piece should do.

The four iterative steps:

Abstraction: Identify the concepts that matter.

Decomposition: Organize the code around those abstractions.

Specification: For each piece, write down what it should do, in a way that’s precise and testable.

Implementation: Write code that satisfies the specifications.

It’s a cycle, and not a one-time process. As you learn:

Refine your abstractions and decomposition.

Update specifications as understanding deepens.

Implement and test iteratively.

1. Abstraction#

Abstraction is deciding what matters and what doesn’t. A good software design is based on the right set of abstractions. As such, we start our design and development process by exploring abstractions.

This is a creative step, where we explore different ways to represent the system and its components.

In scientific computing, this step isn’t given enough attention, but it is crucial:

What matters is the fundamental structure of the design. If you get it wrong, there is no amount of bug fixing and refactoring that will produce a reliable, maintainable, and usable system - D. Jackson. The essence of software. (2021)

As scientists and engineers, we are already accustomed to abstraction: we model complex systems by removing unnecessary details and breaking problems into components.

A natural concern: Aren’t most bugs caused by subtle low-level issues?

Yes, but many “low-level” bugs are symptoms of unclear high-level intent. When top-level responsibilities are muddled, they trickle down into brittle, error-prone implementations. Abstraction pays off by:

clarifying intent

making low-level details easier to manage.

Procedural, Data, and Functional Abstractions#

In software design, there are several complementary ways to capture and manage complexity:

Procedural abstraction: defining procedures (functions or methods) that encapsulate behavior.

Data abstraction: defining data structures that encapsulate state and behavior (think object-oriented design).

Functional abstraction: using pure functions that operate on immutable data.

These forms aren’t mutually exclusive. But in this tutorial, we’ll mostly rely on procedural abstraction, since it’s simpler and fits the style of a small solver like the 1-D heat equation. The reasoning principles apply equally well across all abstraction styles.

2. Decomposition#

If abstraction helps us decide what matters, decomposition helps us decide how to organize it.

We want decomposition to:

Isolate concerns and manage complexity

Facilitate testing and reasoning

Improve maintainability and extensibility

Forms of decomposition include functions/methods (procedures), classes, and modules.

In the case of procedural abstraction, guiding principles in breaking a computation into procedures are:

Each procedure should have a single, well-defined purpose.

Procedures should be general: avoid hard-coding specific values or assumptions.

Each procedure should be as simple as possible, but no simpler:

“A good check for simplicity is to give the procedure a name that describes its purpose. If it is difficult to think of a name, there may be a problem with the procedure.” -Liskov

3. Specification: Writing Down Intent#

Once we have modular pieces, we need to say what each one should do.

A specification is a precise statement of what software should do, independent of how it does it.

Specifications can range from informal descriptions in plain English to fully formal mathematical models.

In this tutorial, we adopt a lightweight, practical style of specification that combines:

type annotations: to capture data types and interfaces,

assertions: statements about what must hold before, during, or after execution.

Assertions can take many forms:

Function-level contracts (preconditions and postconditions),

Semantic assertions that encode domain knowledge,

Computational properties.

Some of these belong inside the code (e.g., preconditions that guard against invalid inputs), while others live adjacent to the code, where they can be checked in test suites. Some are local to a function, while others can span multiple functions or modules.

Where to specify assertions?

Place each assertions where it’s easiest to maintain and run:

Inline for cheap, safety-critical checks (e.g., preconditions regarding input validity, invariants that must hold during execution, postconditions that check outputs).

Alongside the code (e.g., small boolean functions in the same module/class) for local semantic checks.

In dedicated spec modules for broader properties that span multiple components, or are common to many components.

Regardless of where they live, specifications serve the same role:

to make intent explicit and testable.

This flexible approach balances practicality and rigor:

It guides implementation by making expectations explicit.

It supports reasoning and testing by expressing scientific or computational facts as executable checks, placed as close as practical to the code they relate to.

It bridges levels of rigor, from quick runtime guards to property-based testing and formal verification.

Example:#

Below simple function exemplifies our practical approach to specification:

def div(x: float, y: float) -> float: # Type annotations for inputs and output

assert y != 0 # P (precondition)

res = x / y # code (implementation)

assert res * y == x # Q (postcondition)

return res

div(4.0, 2.0)

2.0

Type annotations specify that

divtakes two floats and returns a float.The assertions specify contracts, i.e., pre- and postconditions.

Historically, preconditions and postconditions are often denoted P and Q, respectively, as in Hoare logic:

{P} C {Q}

where C is a command (procedure).

Exercise 2.1:#

Can you find inputs to div where this function fails the postcondition even when the precondition is satisfied?

# div(?, ?)

Answer: One possible input is f(7, 25). The precondition y != 0 is satisfied, but the postcondition res * y == x fails due to floating-point rounding error.

div(7, 25)

---------------------------------------------------------------------------

AssertionError Traceback (most recent call last)

Cell In[4], line 1

----> 1 div(7, 25)

Cell In[1], line 4, in div(x, y)

2 assert y != 0 # P (precondition)

3 res = x / y # code (implementation)

----> 4 assert res * y == x # Q (postcondition)

5 return res

AssertionError:

Accounting for reality:

Floating-point arithmetic isn’t exact.

We can relax postconditions using approximate equality:

from pytest import approx

def div(x: float, y: float) -> float: # Type annotations for inputs and output

assert y != 0 # P (precondition)

res = x / y # code (implementation)

assert res * y == approx(x) # Q (postcondition)

return res

div(7, 25)

0.28

Takeaway: Specifications should reflect computational realities (e.g., floating-point).

4. Implementation: Writing Code that Meets Specifications#

With abstractions identified, pieces decomposed, and specifications written, we can implement code that satisfies them.

This shifts our mindset: instead of starting with lines of code and hoping they work, we start with clear expectations and then fill in implementations that must meet them.

We can then use testing and verification to ensure our implementations meet their specifications instantly.

Heat Equation Solver, Revisited#

In Chapter 1, we wrote a quick, monolithic solver for the 1-D heat equation. It worked, but it mixed boundary conditions, flux calculations, and time-stepping into a single opaque function.

Now we’ll redesign it systematically, using the four-step, iterative process:

Abstraction → Decomposition → Specification → Implementation.

Step 1 — Abstraction#

At this stage, we define the what of our problem, i.e., the key concepts we need to model:

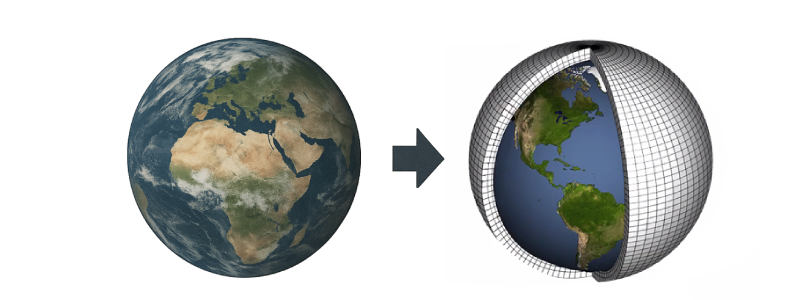

We want to advance a 1-D temperature field \(u_i\) on a uniform grid using a conservative finite volume method:

\( \qquad u_i^{n+1} = u_i^{n} + \Delta t \times \, div(F_i), \qquad div(F_i) = \frac{F_{i} - F_{i+1}}{\Delta x}, \qquad F_i = \begin{cases} q_L & i = 0, \\[6pt] -\kappa \dfrac{u_i - u_{i-1}}{\Delta x} & 1 \leq i \leq N-1, \\[10pt] q_R & i = N. \end{cases} \)

From this description, we can extract the essential concepts that our software must represent:

Mesh: describes the geometry of the domain (cell size \(\Delta x\), number of cells \(N\)).

Cell fields (\(u_i\), \(div(F_i)\)): quantities stored at cell centers.

Face fields (\(F_i\)): quantities stored at cell faces.

Procedures to perform the time-stepping of the heat equation.

These abstractions define what the system consists of, not how it will be computed. They form the conceptual backbone of the solver—independent of data structures, programming language, or numerical optimization choices.

Step 2 – Decomposition#

With our abstractions identified, we now decide how to organize the work. We’ll use procedural abstraction: small, single-purpose functions operating on simple data containers.

The main data structures representing these abstractions are:

mesh: discrete representation of the computational domain (grid spacing, number of cells).

cell field: a vector storing cell-centered values (e.g., temperature, tendencies).

face field: a vector storing face-centered values (e.g., diffusive fluxes).

And for convenience, we’ll define a simple type alias for 1-D vectors:

vec = list[float]

Next, let’s define a mesh data class, i.e., a simple data structure intended for storing data without much additional functionality. Since our approach is procedural, we won’t rely much on object-oriented programming features beyond this simple data class.

from dataclasses import dataclass

@dataclass

class Mesh:

"""Uniform 1-D mesh."""

dx: float # cell size

N: int # number of cells

def cell_field(self) -> vec:

return [0.0] * self.N

def face_field(self) -> vec:

return [0.0] * (self.N + 1)

Notice the helper methods cell_field and face_field that create zero-initialized fields of the appropriate size based on the mesh. This makes correct sizing trivial and prevents off-by-one errors.

Procedures:#

Next, we define the procedures that implement our abstractions. Following the principles of abstraction and decomposition:

Each procedure should have a single, clear purpose.

Procedures should be general: avoid narrowing to specific variables, values, or assumptions.

Each procedure should be as simple as possible, but no simpler.

Based on these principles, we can define the following general procedures:

apply_bc(f_out, bc): apply boundary conditions to face fluxes.diffusive_flux(f_out, c, kappa, dx): compute interior fluxes.divergence(c_out, f, dx): compute divergence of face quantities.

** Naming Convention: **

For generalizable procedures, we don’t want to hard-code specific variable names (e.g., temperature, heat_flux).

Instead, we use:

fto denote face fields.cto denote cell fields.

Additionally, we use _out suffixes to indicate output arguments that will be modified in place, and _inout for arguments that are both input and output.

This makes the procedures general and reusable. If we were to compute the diffusive fluxes of a different quantity (e.g., concentration), we could reuse the same diffusive_flux procedure without modification. (This is called abstraction by parameterization.)

In addition to the above general procedures, we need two more, specific to the heat equation solver. For these, the argument names are more specific to clarify their purpose:

step_heat_eqn(u_inout, kappa, dt, mesh, bc): advance one time step.solve_heat_eqn(u0, kappa, dt, nt, dx, bc): orchestrate multiple steps.

Why this decomposition works:

Clarity: Each function does one thing and does it well.

Reusability: Generic flux and divergence routines can be reused for other physical fields.

Testability: Small, side-effect-controlled procedures are easy to test and verify.

Robustness: Internals can evolve (vectorization, GPU backends, higher-order schemes) without breaking code that depends only on their interfaces.

Step 3 – Specification#

Having identified the pieces of our solver, the next step is to make their intent explicit. A specification is a precise statement of what a function or module should accomplish. Not how it does so, but what must hold true if it’s correct.

Recall, our practical approach to specification combines:

type annotations: to capture data types and interfaces,

assertions: statements about what must hold before, during, or after execution.

Assertions can take many forms:

Function-level contracts (preconditions and postconditions),

Semantic assertions that encode mathematical or physical relationships,

Computational properties.

Some of these checks belong inside the code (for local or safety-critical guarantees), while others live adjacent to it, where they are (re)used in tests and analysis tools.

Core API and Type Annotations#

Before writing any code, we begin by specifying a core API: the set of functions that define what the solver does. At this stage, we’re not implementing behavior, but defining interfaces that describe expectations.

This follows the design principle:

Program to an interface, not an implementation. - Gamma et al. Design Patterns (1994)

Our functions expose clear, minimal interfaces: they declare what inputs and outputs exist, but hide how results are computed. This separation makes the codebase easier to reason about, relate, and structure.

# Procedures:

def apply_bc(f_out: vec, bc: vec) -> None:

"""Apply BCs by overriding first and last face quantities (f_out)."""

...

def diffusive_flux(f_out: vec, c: vec, kappa: float, dx: float) -> None:

"""Given a cell field (c), compute the diffusive flux (f_out)."""

...

def divergence(c_out: vec, f: vec, dx: float) -> None:

"""Compute the divergence of face quantities (f) and store in (c_out)."""

...

def step_heat_eqn(u_inout: vec, kappa: float, dt: float, mesh: Mesh, bc: vec):

"""Advance cell field u by one time step using explicit Euler method."""

...

def solve_heat_eqn(u0: vec, kappa: float, dt: float, nt: int, dx: float, bc: vec) -> vec:

"""Orchestrate nt steps over cell field u."""

...

At this point, our type annotations and core API form the first layer of specification.

Preconditions and Postconditions#

Next, we enrich the interfaces with contracts: conditions that must hold before and after execution.

Take divergence function that computes the divergence of face fluxes:

\(\qquad \nabla \cdot F = \frac{F_{i} - F_{i+1}}{\Delta x} \qquad\)

Two essential preconditions emerge from this formulation:

The mesh spacing

dxmust be positive.The vector \(\nabla \cdot F\) (passed as

c) and the vectorF(passed asf) must have compatible sizes: specifically,len(c) == len(f) - 1.

def divergence(c_out: vec, f: vec, dx: float) -> None:

"""Compute the divergence of face quantities (f) and store in (c_out)."""

assert len(c_out) == len(f) - 1, "Size mismatch"

assert dx > 0, "Non-positive dx"

...

These are simple, local guarantees that keep the interface trustworthy.

Semantic Assertions#

Beyond structural correctness, we can express scientific, mathematical, or computational facts that are expected to hold true.

For example, the Telescoping Property of Divergence: Total divergence equals net flux through boundaries.

We can encode this property as a function that returns True if the property holds, and False otherwise:

def telescoping(c: vec, f: vec, dx: float) -> bool:

"""Check the finite volume telescoping property."""

total_divergence = sum(c) * dx

boundary_flux = f[0] - f[-1]

return total_divergence == approx(boundary_flux)

We can then add this check as a postcondition in the divergence function:

def divergence(c_out: vec, f: vec, dx: float) -> None:

"""Compute the divergence of face quantities (f) and store in (c_out)."""

assert len(c_out) == len(f) - 1, "Size mismatch"

assert dx > 0, "Non-positive dx"

...

assert telescoping(c_out, f, dx), "Telescoping property violated"

Or, alternatively, rather than embedding this assertion directly inside the function, we can include it in a test function that’s part of our test suite.

Exercise 2.2:#

One of the key preconditions for the Euler (FTCS) scheme is the stability condition as given below:

\(\qquad \Delta t \leq \frac{\Delta x^2}{2 \kappa} \qquad\)

Implement a function stability_condition(...) that checks this condition. Which

function(s) would you add this as a precondition to?

def stability_condition(dt: float, dx: float, kappa: float) -> bool:

"""Check the stability condition for the explicit Euler scheme."""

...

Answer:

Below is a possible implementation of the is_stable function:

def stability_condition(dt: float, dx: float, kappa: float) -> bool:

"""Check the stability condition for the explicit Euler scheme."""

return dt <= (dx ** 2) / (2 * kappa)

The function stability_condition can be added as a precondition to the solve_heat_eqn function, as it ensures that the time step dt is appropriate for the spatial discretization dx and the diffusion coefficient kappa, which is crucial for the stability of the explicit Euler scheme used in the heat equation solver.

Exercise 2.3:#

Conservation of total heat can be expressed as:

Special Cases:

If

q_L==q_R== 0, the mean stays constant.If

q_Landq_Rare constant, the temperature profile converges to a linear steady state.

Based on this formulation, implement a checker that verifies conservation between two successive states. Decide where to use it:

as a postcondition after one time step,

as a loop invariant inside the time-stepping loop, or

adjacent to the code in the test suite (recommended), verifying conservation after each step.

Hint: consider floating-point tolerance when comparing totals.

def heat_is_conserved(u_old: vec, u_new: vec, dt: float, dx: float, F: vec) -> bool:

"""Check if heat is conserved."""

...

Answer:

Below is one possible implementation of the heat conservation check.

This condition can be incorporated in several ways: as a postcondition in the step_heat_eqn function,

as a loop invariant within the time-stepping loop in solve_heat_eqn,

or, preferably, as an adjacent specification in the test suite to verify conservation.

Keep inexpensive, local guards inline, and place broader checks like this one nearby in tests, so they run regularly without cluttering the solver’s core logic or performance. This approach keeps the implementation lean and flexible while ensuring that the conservation property remains explicit, verifiable, and routinely checked.

def heat_is_conserved(u_old: vec, u_new: vec, dt: float, dx: float, F: vec) -> bool:

"""Check if heat is conserved."""

lhs = sum(u_new) * dx

rhs = sum(u_old) * dx + dt * (F[0] - F[-1])

return lhs == approx(rhs)

Step 4 – Implementation#

With our abstractions defined, the problem decomposed, and specifications in place, we can now write an implementation that satisfies them.

In this step, we bring together all the functions from the decomposition stage into a single module, heat1d.py.

Saving the code as a standalone file makes it easy to import and reuse in the following chapters.

We also include the previously defined vec type alias and the Mesh data class for completeness.

%%writefile heat1d.py

from dataclasses import dataclass

from pytest import approx

vec = list[float]

@dataclass

class Mesh:

"""Uniform 1-D mesh."""

dx: float # cell size

N: int # number of cells

def cell_field(self) -> vec:

return [0.0] * self.N

def face_field(self) -> vec:

return [0.0] * (self.N + 1)

def apply_bc(f_out: vec, bc: vec) -> None:

"""Apply BCs by overriding first and last face quantities (f_out)."""

assert len(f_out) > 1, "face field size too small"

assert len(bc) == 2, "bc must be of size 2"

f_out[0], f_out[-1] = bc[0], bc[1]

def diffusive_flux(f_out: vec, c: vec, kappa: float, dx: float) -> None:

"""Given a cell field (c), compute the diffusive flux (f_out)."""

assert len(f_out) == len(c) + 1, "Size mismatch"

assert dx > 0 and kappa > 0, "Non-positive dx or kappa"

for i in range(1, len(f_out) - 1):

f_out[i] = -kappa * (c[i] - c[i-1]) / dx

def divergence(c_out: vec, f: vec, dx: float) -> None:

"""Compute the divergence of face quantities (f) and store in (c_out)."""

assert len(c_out) == len(f) - 1, "Size mismatch"

assert dx > 0, "Non-positive dx"

for i in range(len(c_out)):

c_out[i] = (f[i] - f[i+1]) / dx

def step_heat_eqn(u_inout: vec, kappa: float, dt: float, mesh: Mesh, bc: vec):

"""Advance cell field u by one time step using explicit Euler method."""

assert dt > 0, "Non-positive dt"

assert mesh.N == len(u_inout), "Size mismatch"

F = mesh.face_field()

divF = mesh.cell_field()

apply_bc(F, bc)

diffusive_flux(F, u_inout, kappa, mesh.dx)

divergence(divF, F, mesh.dx)

for i in range(mesh.N):

u_inout[i] += dt * divF[i]

def solve_heat_eqn(u0: vec, kappa: float, dt: float, nt: int, dx: float, bc: vec) -> vec:

"""Orchestrate nt steps over cell field u."""

assert nt > 0, "Number of time steps must be positive"

assert dt <= (dx ** 2) / (2 * kappa), "Stability condition not met"

mesh = Mesh(dx, N=len(u0))

u = u0.copy()

for _ in range(nt):

step_heat_eqn(u, kappa, dt, mesh, bc)

return u

Overwriting heat1d.py

Now let’s import the solver and run it:

from heat1d import solve_heat_eqn

solve_heat_eqn(

u0 = [0.0, 100.0, 0.0],

kappa = 0.1,

dt = 1.0,

nt = 1000,

dx = 1.0,

bc = [0.0, 0.0]

)

[33.333333333333314, 33.33333333333333, 33.333333333333314]

What we just did#

We now have a solver whose structure and reasoning are explicit. It’s modular: each function has a single purpose over well-defined data.

We did write a lot more lines, because we added:

Isolation of concerns (apply_bc, flux, divergence, step, solve) instead of one monolith

Type annotations

Executable contracts (pre/postconditions, invariants, semantic assertions)

Lightweight data types (Mesh, vec)

In real applications, this “ceremony” is a small fraction of total code: The heavy lifting lives in numerical computations, I/O, parallelization, physics, etc.. The scaffolding pays off by reducing change amplification and cognitive load:

Safer changes (localized edits)

Better testing (unit, property, etc.)

Faster debugging (failures point to where)

Reusability (shared abstractions)

Looking Ahead#

In Chapter 3, we’ll take the next step:

turning these specifications into unit tests that run automatically,

giving us rapid feedback and confidence as our code evolves.

Foundations and Influences#

For readers interested in the broader theoretical background, Appendix A traces the roots of our approach in the wider history of software design and development. It highlights related methodologies and advocates, such as Bertrand Meyer’s Design by Contract, Barbara Liskov’s work on abstraction and behavioral subtyping, Daniel Jackson’s model-based design, John Ousterhout’s abstraction-driven philosophy, Edsger Dijkstra’s reasoning about programs, and Niklaus Wirth’s principle of stepwise refinement, all of which shaped the foundations of our approach.

Connection to Test-Driven Development#

TDD and related test-first methods emphasize writing tests before code. This practice can be powerful for feature-driven projects, but in scientific computing we often don’t yet know what behavior to test. We’re still discovering the right abstractions.

As such, we begin with abstraction-driven design: sketching clear concepts and contracts, then using tests and experiments as feedback tools to refine those ideas.

In other words:

TDD focuses on getting a feature to work.

We focus on discovering the right design for the problem.

As John Ousterhout argues, good design emerges from reasoning about abstractions. Tests then validate and illuminate those abstractions, not drive them.

R3Sw tutorial by Alper Altuntas (NSF NCAR). Sponsored by the BSSw Fellowship Program. © 2025.

Cite as: Alper Altuntas, Deepak Cherian, Adrianna Foster, Manish Venumuddula, and Helen Kershaw. (2025). “Rigor and Reasoning in Research Software (R3Sw) Tutorial.” Retrieved from https://www.alperaltuntas.com/R3Sw